The risks are immense, but here’s how you can tackle AI challenges head-on

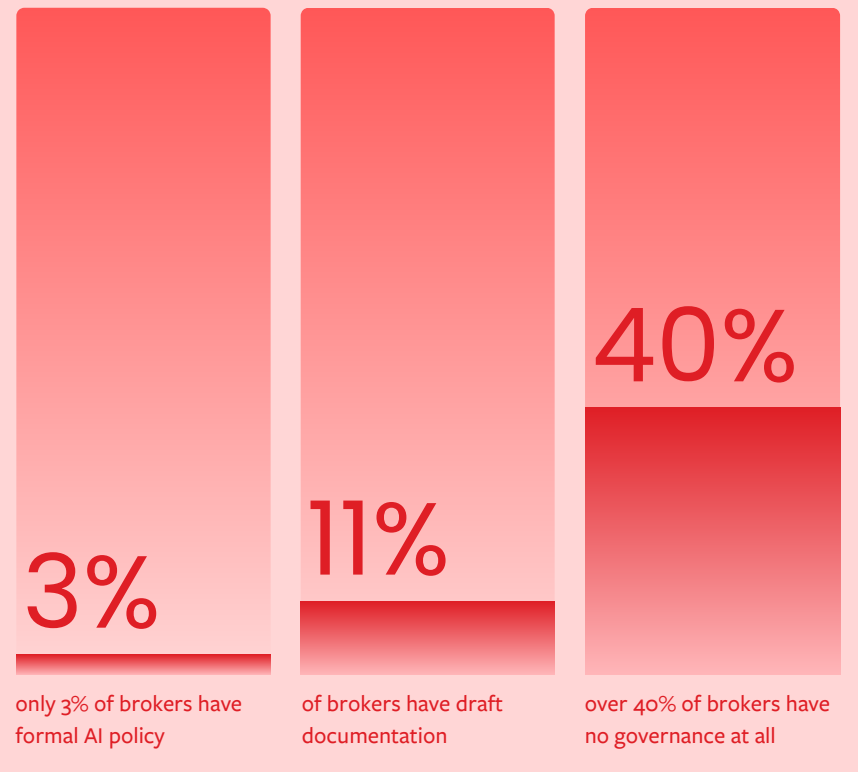

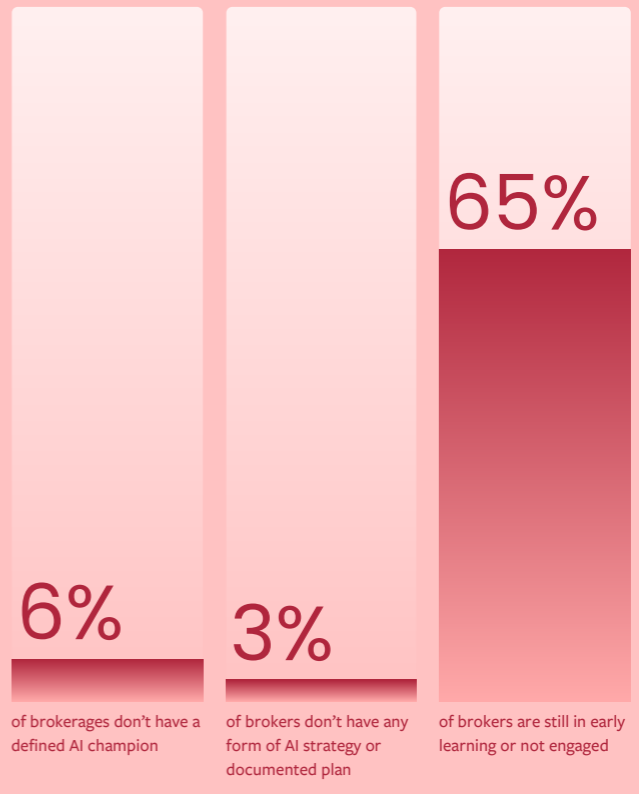

A new report from mortgage aggregator Connective reveals that while 86% of brokers believe AI will be essential or helpful to staying competitive over the next two years, 65% have no clear AI strategy or documented plan, and more than 40% have no governance framework in place.

While brokers are experimenting with tools like ChatGPT and Microsoft Copilot, few have thought through the implications for privacy, compliance, or client trust.

The report identifies five pillars of AI readiness: strategy and mindset; leadership and capability; tools and skills; data and systems; and governance and responsible AI.

Brokers scored highest on strategy and mindset – they understand AI matters – but fell short on nearly everything else. Only 3% of brokers have their AI tools embedded into their workflows, and the same percentage have a formal AI policy in place.

The data and systems pillar emerged as the weakest link. Nearly half of brokers said their AI tools are disconnected from their core systems, while 31% admitted their data is unclear or inaccessible. "Our systems aren't connected, so AI can't go far," one broker said. Another described the problem more colourfully: "Data is everywhere and nowhere."

To address these issues, the Connective report suggests brokers should focus on:

- Ensuring safe handling of client information with appropriate security guardrails in place
- Strengthening data hygiene and structuring data so AI can read it
- Starting with simple, low-risk data workflows with human intervention points for quality assurance
- Aligning processes to clear outcomes before adding automation

The governance gap is also concerning. Only 3% of brokers have a formal AI policy, and 11% have draft documentation. Over 40% have no governance at all, exposing them to privacy breaches, inaccurate information, inconsistent advice, and reputational damage. "I use ChatGPT every day, but I'm not sure I'm using it well," one broker admitted.

The dangers involved

The regulatory landscape is evolving quickly. The Australian Securities and Investments Commission (ASIC) has raised concerns about quality of advice, record-keeping and data handling in AI systems. The Privacy Act 1988 is undergoing major reforms in 2026 that will increase requirements around transparency, consent, and data use.

Meanwhile, the Australian Prudential Regulation Authority (APRA) is shaping lender-side standards around data, risk, and operational resilience.

“Regulators are moving quickly, but existing laws remain technology neutral,” said Connective chief executive Glenn Lees (pictured). “Your obligations around consumer protection, disclosure, privacy and director duties apply to AI as they do to any other system. Responsible use isn’t something to be deferred until new rules arrive. It needs to be embedded from day one.”

Yet brokers are pressing forward without guardrails. Most rely on free or basic versions of AI tools, which offer limited privacy protections and no enterprise-grade security. Few have documented use cases, clearly identified processes they can use AI for, or allocated time to learn. "Someone in the business is playing with AI, but nothing formalised," one broker said. "I don't know where to start," said another.

There is a pronounced skills gap. While 37% of brokers use AI regularly and confidently, another 37% are only experimenting occasionally, and 14% don't use AI tools at all. Prompting confidence varies dramatically. "Prompting is hit or miss – sometimes great, sometimes useless," one broker said.

To address this, the Connective report recommends:

- Improving prompting capability using tools’ best practice guides
- Choosing appropriate paid plans to match privacy and capability appetite
- Building confidence through hands-on practice, including consulting tools’ learning resources and asking your broker community what works for them
- Standardising two to three repeatable workflows using the tools you’re comfortable using

For its part, Connective has developed an AI-enabled platform for brokers and is offering resources like AI Guardrails for Brokers and a Responsible Use of AI Policy template.

Lees emphasised that Connective's approach has been measured. "We test and refine internally first. We focus on solving real broker pain points. We build governance and risk controls up front. That's how we design technology brokers can trust and regulators respect," he explained.

The report draws on a national survey of more than 300 Australian brokers across mortgage, commercial, and asset finance lending, including both Connective members and non-members. The research aligns with findings from credible bodies like the National AI Centre, CSIRO Data61, Cisco, Salesforce, and Gartner.

Remaining competitive

Brokers who ignore these foundational elements risk falling behind.

“Successful AI adoption depends on getting the fundamentals right,” said Lees. “This requires a clear strategy, the right systems and infrastructure, governance frameworks and a culture that prioritises experimentation and safe use.

“With these foundations in place, AI becomes a genuinely useful and sustainable tool. It enhances brokers’ work by automating tasks and streamlining processes, allowing them to focus more on clients and deliver better outcomes while maintaining the compliance and trust that define the broker channel.”

It also means addressing the data problem. Brokers need to strengthen data hygiene, align processes to clear outcomes before adding automation, and ensure safe handling of client information with appropriate security guardrails. Starting with simple, low-risk data workflows with human intervention points for quality assurance is the prudent path.

The report makes clear that brokers who win in the next two to three years will be those who can use AI safely, confidently, and consistently. But getting there requires more than enthusiasm. It requires structure, governance, and a clear-eyed view of the risks.

Brokers ready to move beyond vague AI experimentation should tighten their focus on a few concrete steps. The Connective report recommends:

- Blocking regular time for AI learning or exploration
- Building a simple AI adoption rhythm by prioritising time-intensive work, repetitive tasks and friction points
- Nominating an AI champion for accountability
- Documenting early use cases and goals, however small

“Innovation and prudence are not opposites,” said Lees. “They are the dual engines of sustainable growth. We hope this research helps you understand where you stand across the critical pillars that underpin AI adoption, and steer both with confidence.”